
You can access this spark context UI till your spark shell is open.Storage Memory it took maximum 434.4 MB.When cluster created we did not pass number of thread so it took default number as 8 based on my laptop hardware resource available.JVM is a combination of driver and executer. We are not seeing separate executer process, because we are in local cluster.Check Event Timeline Spark started and executed driver process.To monitor and investigate your spark application you can check spark context web UI.

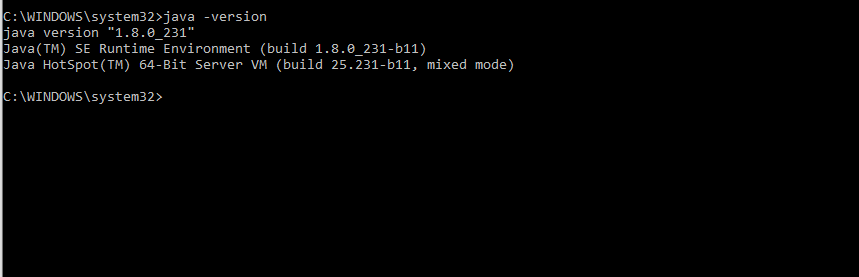

□ option("multiline","true") is important if you have JSON with multiple lines formated by prettier or any other formatterĪnalyzing Spark Jobs using Spark Context Web UI in your MAC laptop json ( "/Users/rupeshti/workdir/git-box/learning-apache-spark/src/test.json" ) df.show () # read and display json file with multiline formated json like I have in my example df = ( "multiline", "true" ). You will learn about spark shell, local cluster, driver, executor and Spark Context UI. Running small program with PySpark Shell in your MAC laptop Now run pyspark to see the spark shell in python. Step 4: Running PySpark shell in your MAC laptop Next setup pyspark_python environment variable to point python3:Īlso put this script on your startup command file.In order to check type python3 on your terminal. If you already have python3 then ignore this step. Now you can start the spark shell type spark-shell.Place this path in your startup script as well.Here is the command to update $path export PATH=$PATH:$SPARK_HOME/bin.Next add the spark home bin path in your default $PATH.Open new terminal and check the spark home path.zshrc, Press “i” to edit, Press escape then :wq to save the file Set the Spark_home path to point to the spark3 folderĮxport SPARK_HOME=~/spark3/spark-3.2.1-bin-hadoop3.2.Sudo tar -zxvf spark-3.2.1-bin-hadoop3.2.tgz Move the tar file in spark3 folder (newly created).Go to apache spark site and download the latest version.Install JAVA steps on your mac machine Step 2: Install Spark on your MAC You will able to install spark and also run spark shell and pyspark shell on your mac. Working with spark-submit on EMR cluster.Step 3: Running PySpark on Notebook on EMR cluster at AWS cloud using Zeppelin.Step 2: Running PySpark on EMR cluster in AWS cloud using Spark-Shell.

Step 1: Creating EMR cluster at AWS cloud.Installing Multi-Node Spark Cluster in AWS Cloud.Step 3 connecting spark with notebook shell.Step 1: Setting environment variable and starting notebook.Running Jupyter Notebook ( In local cluster and client mode ) in your MAC laptop.Analyzing Spark Jobs using Spark Context Web UI in your MAC laptop.Running small program with PySpark Shell in your MAC laptop.Step 4: Running PySpark shell in your MAC laptop.Install & Run Spark on your MAC machine & AWS Cloud step by step guide.Install & Run Spark on your MAC machine & AWS Cloud step by step guide Learn Apache spark by doing hands on View on GitHub Install & Run Spark on your MAC machine & AWS Cloud step by step guide
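The default of 8 threads reported in the Spark context UI comes from the shell starting with a `local[*]` master when no master is passed, which uses one worker thread per logical core. Here is a minimal sketch of that rule using only the Python standard library. `local_master` is a hypothetical helper for illustration, not part of Spark, and the core count printed depends on your machine (it usually matches what Spark reports, but that is an assumption).

```python
import os


def local_master(threads=None):
    """Build a Spark local-mode master URL.

    threads=None mirrors the shell default, local[*], where Spark
    uses as many worker threads as there are logical cores.
    """
    if threads is None:
        return "local[*]"
    if threads < 1:
        raise ValueError("threads must be at least 1")
    return f"local[{threads}]"


# Logical cores visible to Python; the author's laptop showed 8 in the UI.
cores = os.cpu_count()
print("logical cores:", cores)
print("default master:", local_master())
print("pinned master:", local_master(2))
```

To try a pinned value, start the shell with `pyspark --master "local[2]"` (quoted, because zsh treats square brackets as a glob pattern) and the UI should then report 2 cores instead of the default.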
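The reason `option("multiline","true")` matters when reading test.json is that Spark's JSON reader by default expects one complete JSON object per line. The sketch below shows the difference with the standard library only: a pretty-printed file is valid as a whole document but not line by line. The sample record is hypothetical (the contents of the author's test.json are not shown), and `read_pretty_json` assumes you pass in the `spark` session that the pyspark shell already provides.

```python
import json
import os
import tempfile

# Hypothetical sample record; the author's real test.json is not shown.
record = {"name": "spark", "version": "3.2.1"}

# Write it the way a formatter would: one object spread over several lines.
path = os.path.join(tempfile.mkdtemp(), "test.json")
with open(path, "w") as f:
    json.dump(record, f, indent=2)


def parses_line_by_line(file_path):
    """Return True if every non-empty line is a complete JSON document."""
    with open(file_path) as f:
        for line in f:
            if not line.strip():
                continue
            try:
                json.loads(line)
            except json.JSONDecodeError:
                return False
    return True


def parses_as_whole_file(file_path):
    """Return True if the file as a whole is one valid JSON document."""
    with open(file_path) as f:
        try:
            json.load(f)
        except json.JSONDecodeError:
            return False
    return True


line_ok = parses_line_by_line(path)
whole_ok = parses_as_whole_file(path)
print("valid line by line:", line_ok)
print("valid as one document:", whole_ok)


def read_pretty_json(spark, file_path):
    """Read a pretty-printed JSON file using an existing SparkSession."""
    return spark.read.option("multiline", "true").json(file_path)
```

Inside the pyspark shell, `read_pretty_json(spark, path).show()` would display the record. Without the option, Spark typically cannot parse such a file and reports a `_corrupt_record` column instead.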

